Sorting is a fundamental operation in coding, used in a wide range of applications from data analysis to search algorithms. While there are many sorting algorithms, each with its own strengths and weaknesses, some algorithms are more efficient than others for particular types of data. In this article, we will focus on the problem of sorting an array using minimum swaps.

Minimum Swaps to Sort An Array

Let's check the problem statement to understand it better: Given an array of n integers, find the minimum number of swaps required to sort the array in ascending order. For example, consider the array [4, 3, 2, 1]. The minimum number of swaps required to sort this array in ascending order is three: we can swap 4 with 1, 3 with 2, and 4 with 2.

Selection Sort

One approach to solving this problem is to use selection sort.

Selection sort is a simple sorting algorithm that works by repeatedly finding the smallest element from the unsorted part of the array and swapping it with the first element of the unsorted part. This process continues until the array is completely sorted.

To use selection sort to find the minimum number of swaps required to sort an array, we first create a copy of the array and sort it using selection sort. We then compare the original array with the sorted copy to determine the number of swaps required to convert the original array into the sorted copy.

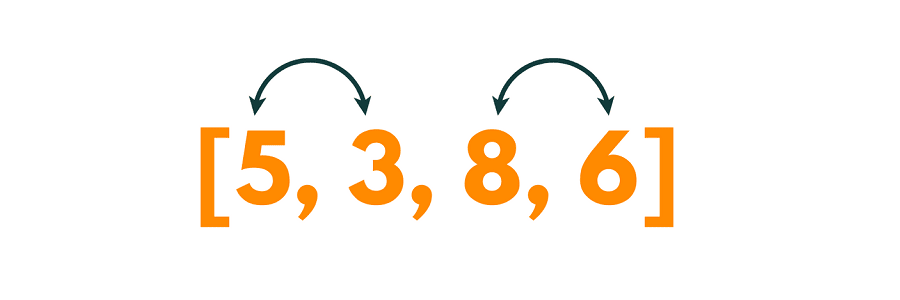

Let's consider an example to understand this approach better. Consider the following unsorted array:

We create a copy of the array and sort it using selection sort:

We can then compare the two arrays to determine the number of swaps required. Starting from the first element of the original array, we find the position of the corresponding element in the sorted array. In this case, the first element of the original array is 5, and its position in the sorted array is 2.

Since these two elements are not in their correct positions, we need to swap them. After the first swap, the array becomes:

Next, we move on to the second element of the original array, which is 3. Its correct position in the sorted array is 0. Since these two elements are not in their correct positions, we need to swap them. After the second swap, the array becomes:

`Finally, we move on to the third element of the original array, which is 8. Its correct position in the sorted array is 3. Since these two elements are already in their correct positions, we don't need to perform any swaps.

Therefore, the minimum number of swaps required to sort the array is 2.

Selection sort has a time complexity of O(n^2) and a space complexity of O(1), making it suitable for small arrays. However, for larger arrays, selection sort can be inefficient. In addition, selection sort does not take advantage of any pre-existing order in the array, which can result in a worst-case time complexity of O(n^2).

Overall, it can be used to find the minimum number of swaps required to sort an array in a non-decreasing order. However, it is not the most efficient algorithm for large arrays and does not take advantage of any pre-existing order in the array. Other sorting algorithms such as quicksort or mergesort may be more suitable for larger arrays.

Graph Theory

Specifically, we can model the array as a directed graph and use graph theory algorithms to find the minimum number of swaps required to sort it.

For example, consider the array A = [5, 3, 8, 6]. The minimum number of swaps required to sort this array in non-decreasing order is two: we can swap the first and second elements to get A = [3, 5, 8, 6], and then swap the third and fourth elements to get A = [3, 5, 6, 8].

To solve this problem using graph theory, we can represent the array A as a directed graph G = (V, E), where V is the set of nodes and E is the set of directed edges. We create a node for each element in the array and connect each node to all nodes representing larger elements. In other words, if A[i] > A[j], we add a directed edge from node i to node j.

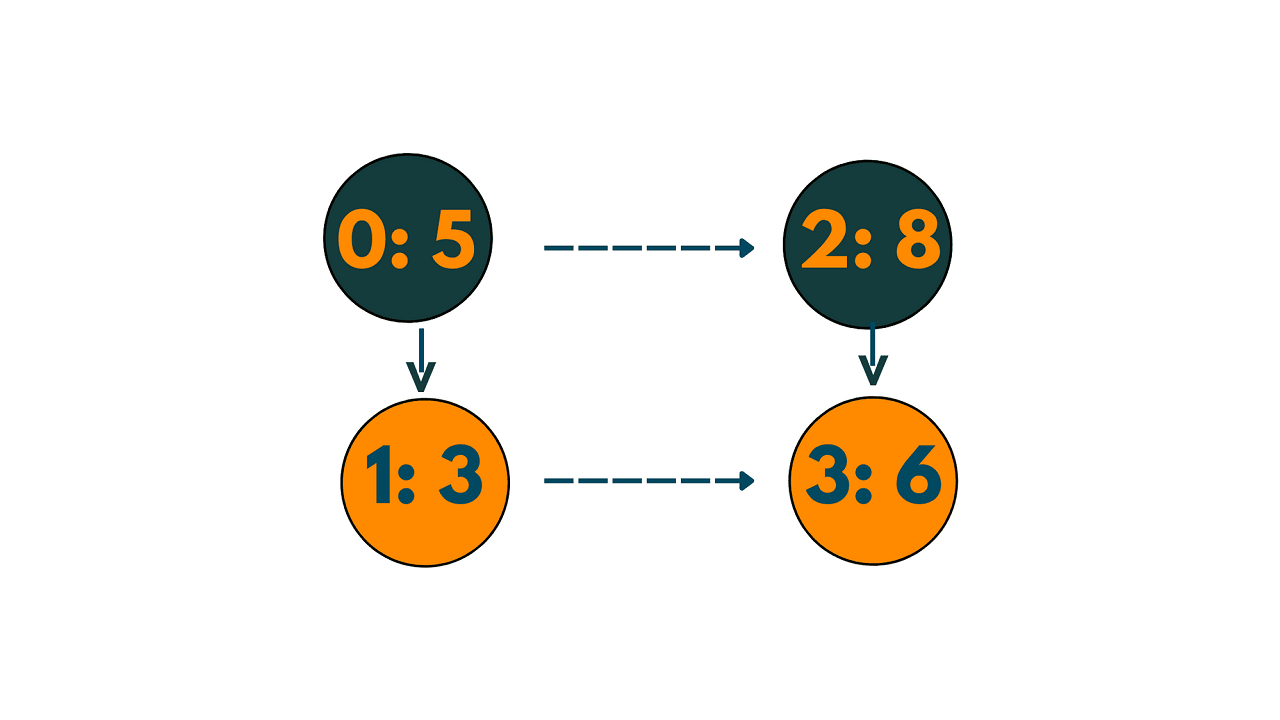

For example, the array A = [5, 3, 8, 6] corresponds to the graph shown below:

In this graph, there is a directed edge from node 0 to node 2 because A[0] = 5 is larger than A[2] = 8. Similarly, there is an edge from node 1 to node 3 because A[1] = 3 is smaller than A[3] = 6.

Once we have created graph G, we can find the minimum number of swaps required to sort the array by finding the length of the longest path in G. This is because each edge in the path corresponds to a swap between two adjacent elements in the array.

To find the longest path in the graph G, we can use dynamic programming. We create an array L of length n, where L[i] represents the length of the longest path starting at node i. We then iterate over the nodes in reverse order, updating L[i] as follows:

for i = n-1 to 0 L[i] = 1 for j in neighbors of i L[i] = max(L[i], L[j]+1)

The outer loop iterates over the nodes in reverse order, while the inner loop iterates over the neighbors of node i. If there is an edge from node i to node j, we update L[i] to be the maximum of its current value and L[j]+1, where L[j]+1 represents the length of the longest path starting at j plus one for the edge from i to j.

Finally, the length of the longest path in Graph G is the minimum number of swaps required to sort the array in a non-decreasing order.

Here is the full C++ code for finding the minimum number of swaps required to sort an array using graph theory:

#include<iostream> #include<vector> #include<algorithms> using namespace std; int minimumSwaps(vector<int>& arr) { int n = arr.size(); vector<int> sortedArr(arr); sort(sortedArr.begin(), sortedArr.end()); vector<bool> visited(n, false); int swaps = 0; for (int i = 0; i < n; i++) { if (visited[i] || arr[i] == sortedArr[i]) { continue; } int j = i; int cycle_size = 0; while (!visited[j]) { visited[j] = true; j = find(sortedArr.begin(), sortedArr.end(), arr[j]) - sortedArr.begin(); cycle_size++; } swaps += cycle_size - 1; } return swaps; } int main() { vector<int> arr = {5, 3, 8, 6}; int swaps = minimumSwaps(arr); cout << "Minimum number of swaps required to sort the array: " << swaps << endl; return 0; }

Output:

Minimum number of swaps required to sort the array: 2

The function minimumSwaps takes an input array arr and returns the minimum number of swaps required to sort it.

In this code, we first create a sorted copy of the input array using the sort function. We then initialize a boolean vector visited to keep track of visited indices, and a variable swaps to count the number of swaps required to sort the array.

We then iterate through the array using a for loop. If the current element is already visited or already in the correct position, we continue to the next iteration. Otherwise, we start a new cycle by initializing a variable j to the current index i.

We then keep following the cycle until we encounter an already visited index, marking each visited index along the way. We do this by setting the index j to the position of the element in the sorted array, which we find using the find function. We also increment the variable cycle_size for each visited index.

Once we have completed the cycle, we add the number of swaps required to move the cycle elements into their correct position to the swaps variable.

Finally, we return the total number of swaps required.

The time complexity of the above code is O(n log n), where n is the size of the input array. The space complexity of the above code is O(n).

Minimum Swaps to Sort Array in Descending Order

Similarly, we can use the graph theory to find the minimum number of swaps to sort an array in descending order.

Here is a C++ code for finding the minimum number of swaps required to sort an array in descending order using graph theory:

#include<iostream> #include<vector> #include<algorithms> using namespace std; int minimumSwaps(vector<int>& arr) { int n = arr.size(); vector<int> sortedArr(arr); sort(sortedArr.rbegin(), sortedArr.rend()); vector<bool> visited(n, false); int swaps = 0; for (int i = 0; i < n; i++) { if (visited[i] || arr[i] == sortedArr[i]) { continue; } int j = i; int cycle_size = 0; while (!visited[j]) { visited[j] = true; j = find(sortedArr.begin(), sortedArr.end(), arr[j]) - sortedArr.begin(); cycle_size++; } swaps += cycle_size - 1; } return swaps; } int main() { vector<int> arr = {5, 3, 8, 6}; int swaps = minimumSwaps(arr); cout << "Minimum number of swaps required to sort the array in descending order: " << swaps << endl; return 0; }

Output:

Minimum number of swaps required to sort the array in descending order: 2

The code is almost identical to the previous one, with the only difference being that we sort the sortedArr vector in descending order using sort(sortedArr.rbegin(), sortedArr.rend()). This sorts the array in descending order instead of ascending order.

The time and space complexity of this algorithm is identical to the previous one, which is O(n^2) and O(n), respectively.

Minimum Swaps to Sort an Array with Duplicates

To find the minimum number of swaps required to sort an array with duplicates, we can modify the previous algorithms slightly. Here is a C++ code for finding the minimum number of swaps required to sort an array with duplicates:

#include<iostream> #include<vector> #include<algorithms> #include<climits> #include<unordered_map> using namespace std; int minimumSwaps(vector<int>& arr) { int n = arr.size(); vector<int> sortedArr(arr); sort(sortedArr.begin(), sortedArr.end()); unordered_map<int, int> pos; for (int i = 0; i < n; i++) { pos[sortedArr[i]] = i; } vector<bool> visited(n, false); int swaps = 0; for (int i = 0; i < n; i++) { if (visited[i] || arr[i] == sortedArr[i]) { continue; } int j = i; int cycle_size = 0; int cycle_min = INT_MAX; while (!visited[j]) { visited[j] = true; cycle_min = min(cycle_min, arr[j]); j = pos[cycle_min]; cycle_size++; } swaps += cycle_size - 1; } return swaps; } int main() { vector<int> arr = {5, 3, 8, 6, 8}; int swaps = minimumSwaps(arr); cout << "Minimum number of swaps required to sort the array: " << swaps << endl; return 0; }

Output:

Minimum number of swaps required to sort the array: 2

The code is similar to the previous one, with the addition of an unordered map pos that stores the positions of each element in the sorted array. We also initialize a variable cycle_min to keep track of the minimum element in the current cycle.

In the for loop, we iterate through the input array and initialize cycle_min to the maximum integer value. We then follow the cycle until we encounter an already visited index, marking each visited index along the way.

We update cycle_min to the minimum element in the current cycle and set the index j to the position of the new cycle_min in the sorted array using the pos map. We also increment the variable cycle_size for each visited index. Once we have completed the cycle, we add the number of swaps required to move the cycle elements into their correct position to the swaps variable.

The time complexity of this algorithm is O(nlogn) due to the sorting step and the use of an unordered map, which has an average time complexity of O(1). The space complexity of the algorithm is O(n) due to the use of additional vectors and maps.

Conclusion

In this article, we discussed in detail the minimum swaps to sort an array using Selection Sort and Graph Theory with implementation in C++. If you still have doubts, connect with our DSA Experts Online for more help.