Discovering hidden patterns and finding solutions to challenging problems are two fascinating aspects of mathematics and programming. One such important concept is the perfect squares. In this article, we will explore the fascinating problem of finding the least number of perfect squares that sum up to a given number using recursion and dynamic programming.

What is a Perfect Squares Problem?

A perfect square is a number that can be expressed as the product of an integer multiplied by itself.

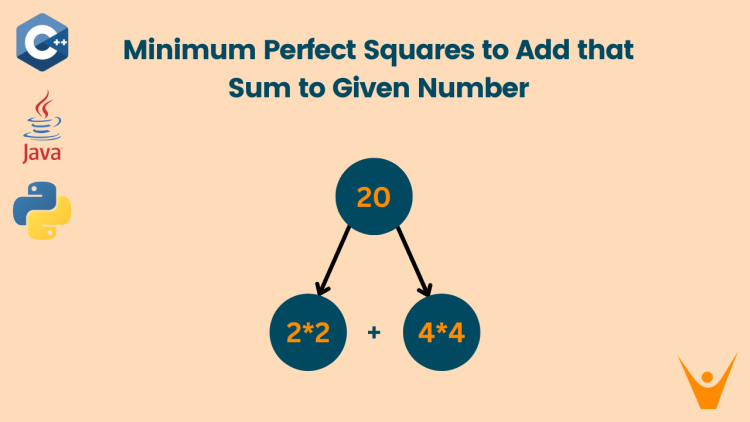

In the Perfect Squares problem, we are given a number n and we have to find the goal to find the minimum number of perfect square numbers (numbers that are squares of integers) whose sum is equal to a given number n.

Let us take an example to understand clearly:

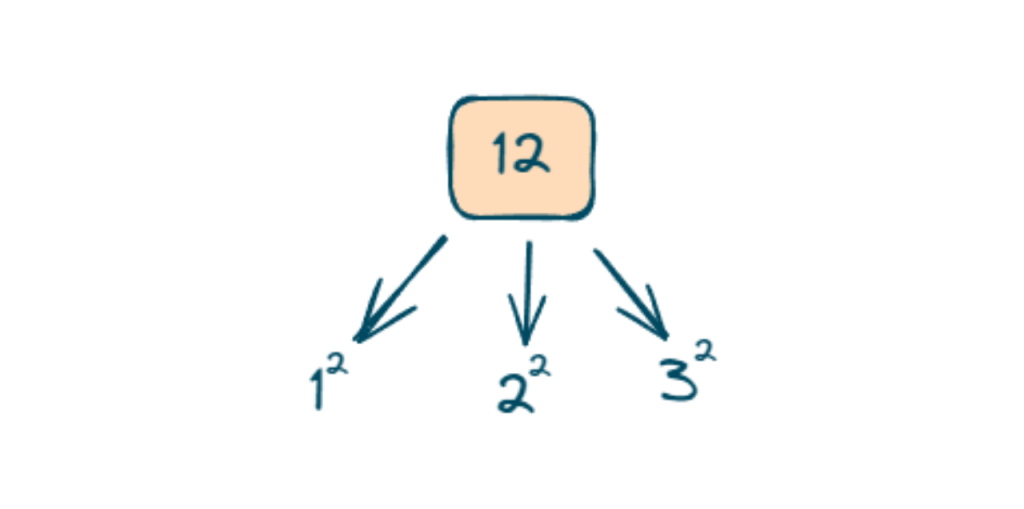

Suppose N = 12

12 can be obtained in many ways by adding perfect square numbers but the best way to make 12 by adding a minimum perfect square number is (4+4+4).

12 can be obtained by adding the perfect square number till 32 because 32 = 9 (9<12) and 42 = 16 (16>12) so we cannot add 42 to make 12

So 12 can only be obtained by using addition on 12, 22, 32.

Recursive Approach

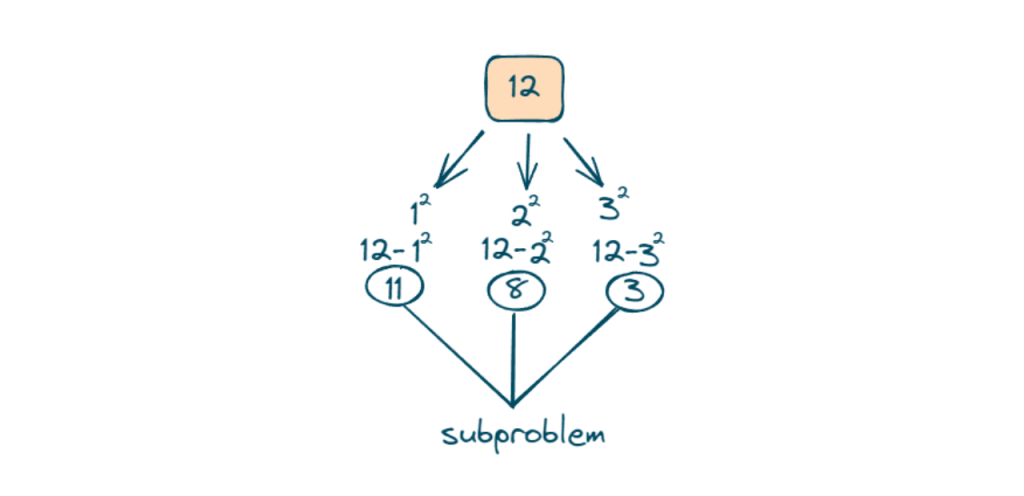

A recursive approach to solving the perfect squares problem involves defining a recursive function that calculates the minimum number of perfect square numbers needed to sum up to a given number n. The function will have a base case and make recursive calls to smaller subproblems:

- We will create a function that checks whether n is a perfect square.

- The function makes recursive calls for each possible perfect square less than or equal to n.

- The base case checks if n itself is a perfect square. If it is, the function returns 1, indicating that it’s already a perfect square.

- The result is updated by choosing the minimum of the current value and the result of the recursive call for the subproblem (n – i*i).

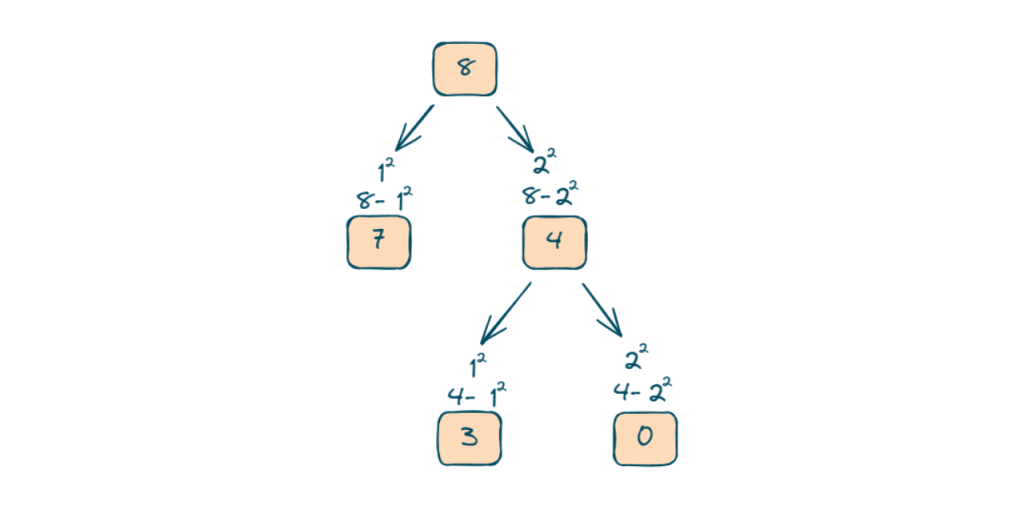

We will further explore and store the minimum in each step and in this case, we will explore 8.

The time complexity is exponential because, in the worst case, the function explores all possible perfect squares less than or equal to n, and for each of these squares, it makes a recursive call. The number of possible perfect squares explored is roughly n and the depth of the recursive tree can go up to n therefore the overall time complexity is O(n^n).

Dynamic Programming Approach

Dynamic programming is a powerful technique that can be applied to solve various optimization problems. In finding the least number of perfect squares, we can utilize dynamic programming to compute the solution efficiently. In this approach,

- We will create an array, dp[], where dp[i] represents the minimum number of perfect squares required to achieve the sum i.

- We initialize all elements of dp[] to a maximum value to signify that they are yet to be computed and Initialize the table with base cases. For example, the minimum number of perfect squares needed to sum up to 0 is 0 (no squares needed). The minimum number for 1 is 1, as 1 itself is a perfect square.

- We will iterate through all numbers from 1 to n and update the dp[] array based on the minimum sum achievable. By considering different combinations of perfect squares,

- We can gradually build up the solution until we reach the desired number. Finally, we return dp[n] as the minimum number of perfect squares required to sum up to n.

C++ Code

Here is the C++ program to solve the ‘Perfect Squares’ problem using dynamic programming approach:

#include<bits/stdc++.h>

class Solution {

public:

int numSquares(int n) {

// Create a vector to store the minimum number of perfect squares required for each number up to n.

std::vector<int> dp(n + 1, INT_MAX);

// Initialize base cases

dp[0] = 0;

dp[1] = 1;

// Build up the dp table

for (int i = 2; i <= n; ++i) {

for (int j = 1; j * j <= i; ++j) {

dp[i] = std::min(dp[i], dp[i - j * j] + 1);

}

}

// Return the result from the last cell of the dp table

return dp[n];

}

};

int main() {

Solution solution;

int n = 12;

int result = solution.numSquares(n);

std::cout << "The minimum number of perfect squares needed to sum to " << n << " is: " << result << std::endl;

return 0;

}Java Code

We can also solve the same programming program in Java:

import java.util.Arrays;

class Solution {

public int numSquares(int n) {

// Create an array to store the minimum number of perfect squares required for each number up to n.

int[] dp = new int[n + 1];

Arrays.fill(dp, Integer.MAX_VALUE);

// Initialize base cases

dp[0] = 0;

dp[1] = 1;

// Build up the dp table

for (int i = 2; i <= n; ++i) {

for (int j = 1; j * j <= i; ++j) {

dp[i] = Math.min(dp[i], dp[i - j * j] + 1);

}

}

// Return the result from the last cell of the dp table

return dp[n];

}

public static void main(String[] args) {

Solution solution = new Solution();

int n = 12;

int result = solution.numSquares(n);

System.out.println("The minimum number of perfect squares needed to sum to " + n + " is: " + result);

}

}Python Code

The dynamic programming approach to solve the ‘Perfect Squares’ leetcode problem can easily be implemented in Python as well:

class Solution:

def numSquares(self, n: int) -> int:

# Create a list to store the minimum number of perfect squares required for each number up to n.

dp = [float('inf')] * (n + 1)

# Initialize base cases

dp[0] = 0

dp[1] = 1

# Build up the dp table

for i in range(2, n + 1):

j = 1

while j * j <= i:

dp[i] = min(dp[i], dp[i - j * j] + 1)

j += 1

# Return the result from the last cell of the dp table

return dp[n]

# Test the solution

solution = Solution()

n = 12

result = solution.numSquares(n)

print(f"The minimum number of perfect squares needed to sum to {n} is: {result}")Output:

The minimum number of perfect squares needed to sum to 12 is: 3The time complexity for this approach is O(n*sqrt n) and the space complexity is O(n).

Comparison of Both Approaches

Let’s see a quick review of both the methods:

| Approach | Time Complexity | Space Complexity | Details |

| Recursive Approach | O(n)n | O(1) | It explores all possible perfect squares less than or equal to n, and for each of these squares, it makes a recursive call. |

| Dynamic Programming Approach | O(n*sqrt n) | O(n) | The dynamic programming approach uses an array to store the result of the pre-computed problem. |

The dynamic programming approach is recommended for practical implementations, providing a good balance between time and space complexity.

Conclusion

For the perfect square problem, we have discussed two approaches. The recursive approach, while conceptually straightforward, is not practical for larger inputs due to its exponential time complexity. Dynamic programming approach significantly improves efficiency by using memoization to avoid redundant calculations, resulting in a polynomial time complexity.