AI Models cost a lot for the big tech companies. They require a lot of computing resources, significantly when solving complex problems. But a new method might solve this problem for them, called Chain of Draft. It can make AI reasoning models more efficient.

Chain of Draft Method for AI Reasoning

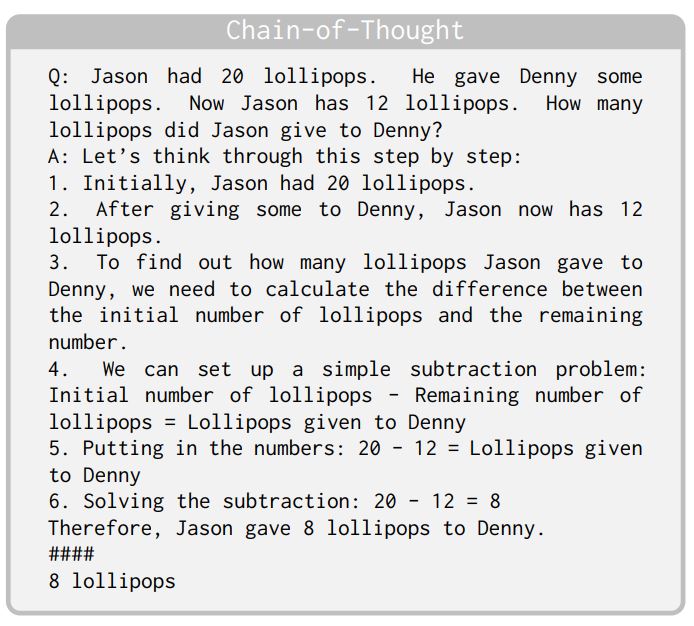

Traditional LLMs rely on the Chain-of-Thought (CoT) method. When you give a problem to the LLM, it will write out every step to solve it, even if the answer seems obvious. This means more tokens are used, and eventually, more processing power is required, increasing the costs. It also means the response time will increase.

Let’s take this sample problem: “Jason had 20 lollipops. He gave Denny some lollipops. Now Jason has 12 lollipops. How many lollipops did Jason give to Denny?“

Here’s how the CoT method will work:

Now, Zoom researchers have revealed a new way to solve these issues. Instead of every detail, let LLM focus on the essential reasoning.

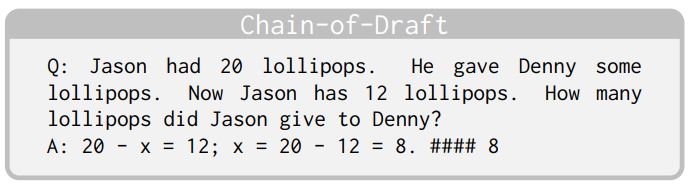

The research paper, titled “Chain of Draft: Thinking Faster by Writing Less”, explores a new approach called Chain of Draft (CoD). CoD mimics human cognitive processes by producing concise yet informative intermediate reasoning steps instead of lengthy explanations.

CoD draws inspiration from how humans think: rather than explaining every detail, it often summarizes key ideas in short, concise output at each step.

Here’s how CoD will work on the same problem:

The answer is the same, but CoD does it with far fewer words. In the research, the Chain of Draft method only uses 7.6% of the token when compared to the Chain of Thought method. This means tokens are saved, less computing power is used, and the response is faster.

Does it work?

Does the Chain of Thought process affect the accuracy? No, and sometimes the CoD is even better in performance. Using datasets like GSM8K, CoD maintained an accuracy in arithmetic reasoning. In commonsense reasoning, it outperformed the traditional method.

Overall, CoD had the same accuracy as detailed CoT but used up to 86% fewer words.

Chain of Draft improves efficiency by shortening reasoning steps, reducing both input and output tokens. While CoD isn’t ideal for all tasks, some require deeper reasoning or external knowledge, it can be combined with methods like adaptive parallel reasoning for better results.

However, there are some limitations as well. Certain reasoning tasks, especially those needing multi-step verification or deep contextual understanding, may suffer from CoD’s shorter intermediate steps, leading to potential accuracy trade-offs.

Then, by focusing on conciseness, CoD removes less relevant details, which could be problematic in scenarios where nuanced reasoning is necessary.

Like any experiment, this one also had certain restrictions. It struggles in zero-shot scenarios, where no example solutions are provided beforehand. Researchers found that without a few-shot prompt to guide it, CoD’s effectiveness drops significantly.

For instance, when tested with Claude 3.5 Sonnet, CoD improved accuracy by only 3.6%. Additionally, the expected token savings were far less noticeable.

Why does this happen? The researchers believe it’s because CoD-style concise reasoning isn’t common in the training data of LLMs. Without examples to follow, AI struggles to generate efficient, insightful drafts on its own.

Takeaways

The Chain of Draft method not only makes the LLM responses snappier. The compact reasoning approach could inspire new strategies for training AI models with concise data and enhancing efficiency. Meta recently talked about a Multi-token prediction to speed up LLMs. Microsoft also released LLMLingua 2, a novel approach for prompt compression.

In many applications, speed is just as important as accuracy. By teaching machines to think more like humans, we’re making AI smarter.